Researchers have reached a critical milestone in the development of photonic quantum computers, demonstrating a new method to prevent errors before they occur. By utilizing a technique known as photon distillation, scientists have shown that it is possible to mitigate “noise” in light-based systems, clearing a major hurdle toward building large-scale, fault-tolerant quantum computers.

The Photonic Advantage and Its Achilles’ Heel

To understand this breakthrough, one must first understand the fundamental difference between the two leading types of quantum computing:

- Superconducting Quantum Computers: These use electronic circuits to create qubits. While powerful, they generate significant heat and require extreme cooling to near absolute zero to function.

- Photonic Quantum Computers: These use particles of light (photons) as qubits. Because photons are in constant motion, they generate very little excess heat, allowing these systems to potentially operate at room temperature.

However, this mobility is a double-edged sword. Because photons move at the speed of light and interact through complex optical paths (mirrors and beam splitters), they are incredibly “brittle.” In a photonic system, errors often stem from “rogue photons,” particles that fail to interact correctly with others and effectively drift through the system as useless noise.

The Challenge: Errors Before Computation

In most quantum systems, error correction happens after a mistake has been made. This is problematic for light-based systems because the errors often occur before the photon is even processed as a qubit.

As Jelmer Renema, chief scientist at QuiX Quantum, explains, photonic computing is inherently probabilistic. When researchers manipulate light, they are essentially managing probabilities. Without a way to filter out the “bad” photons, the likelihood of a successful computation drops every time you add more components to the system. In traditional scaling, adding more qubits often introduces more errors than can be corrected, creating a mathematical wall that prevents computers from getting larger or more useful.
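
The scaling problem can be made concrete with a small sketch. It assumes, purely for illustration, that each photon is independently “good” with probability `p_good` and that a computation succeeds only if every photon is good. The independence assumption, the function name, and the numbers are hypothetical and do not come from the study.

```python
def success_probability(p_good: float, n_photons: int) -> float:
    """Chance that all n_photons are good, assuming independent photons."""
    if not 0.0 <= p_good <= 1.0:
        raise ValueError("p_good must be between 0 and 1")
    if n_photons < 0:
        raise ValueError("n_photons must be non-negative")
    # Independent events: the joint probability is the product.
    return p_good ** n_photons


# Illustrative value only: 98% of photons are assumed to be good.
for n in (1, 10, 50, 100):
    print(f"{n:>3} photons: {success_probability(0.98, n):.3f}")
```

Under these assumptions, even a 2% chance of a bad photon shrinks the success rate to about 13% by the time 100 photons are involved, which is the kind of wall described above.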

The Solution: Photon Distillation

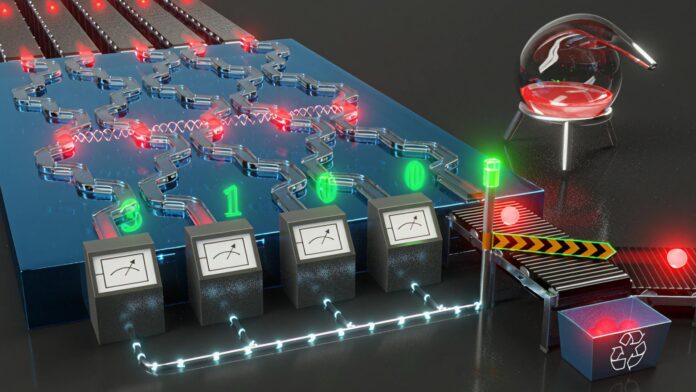

The breakthrough detailed in a recent study involves a process called quantum photonic distillation. Instead of trying to fix a broken calculation, this method acts as a high-tech filter.

How it works:

- Quantum Interference: The system uses specialized optical circuits to exploit “quantum interference,” a phenomenon in which the probability amplitudes of different paths combine, reinforcing some outcomes and cancelling others.

- Filtering “Rogue” Photons: The circuit is designed so that the probability of a “rogue” photon reaching the output is significantly lower than the probability of a “good” photon passing through.

- Net-Positive Scaling: This process produces high-quality photons before they are used for computation.
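
The study's distillation circuit is not reproduced here, but the interference effect it relies on can be illustrated with the simplest textbook case: two photons meeting at a 50:50 beam splitter (the Hong-Ou-Mandel effect). In the sketch below, `overlap` is a hypothetical parameter describing how indistinguishable the two photons are (1 for identical photons, 0 for a fully distinguishable “rogue” photon).

```python
import math

# 50:50 beam splitter: amplitude for a photon entering port i to leave by port j.
T = 1 / math.sqrt(2)
BEAM_SPLITTER = [[T, T],
                 [T, -T]]


def coincidence_probability(overlap: float) -> float:
    """Probability that the two photons leave through different output ports.

    overlap: squared overlap of the two photon states, between 0 and 1.
    """
    if not 0.0 <= overlap <= 1.0:
        raise ValueError("overlap must be between 0 and 1")
    u = BEAM_SPLITTER
    straight = u[0][0] * u[1][1]  # photon 0 -> port 0, photon 1 -> port 1
    swapped = u[0][1] * u[1][0]   # photon 0 -> port 1, photon 1 -> port 0
    # Identical photons: the two paths interfere, so amplitudes add first.
    p_identical = (straight + swapped) ** 2
    # Distinguishable photons: no interference, so probabilities add.
    p_distinct = straight ** 2 + swapped ** 2
    return overlap * p_identical + (1 - overlap) * p_distinct


for x in (1.0, 0.9, 0.0):
    print(f"overlap {x:.1f}: coincidence probability {coincidence_probability(x):.3f}")
```

Identical photons never leave through separate ports, while a fully distinguishable photon pair does so half the time. An interference-based filter can exploit this kind of difference in output statistics, although the circuit used in the study may differ from this two-photon example.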

The most significant finding is that this technique achieves “below-threshold error mitigation.” This means that as the system scales up and becomes more complex, the distillation process reduces the error rate more effectively than the new components increase it.
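
The “below-threshold” condition can be pictured with a deliberately simple toy model. It assumes that distillation multiplies the incoming error rate by a factor `suppression` while the extra optical components contribute a fixed `added_error`. The linear form, the parameter names, and the numbers are all hypothetical and are not taken from the study.

```python
def error_after_distillation(error_in: float, suppression: float,
                             added_error: float) -> float:
    """Toy model: scaled incoming error plus error from the extra optics."""
    return suppression * error_in + added_error


def is_below_threshold(error_in: float, suppression: float,
                       added_error: float) -> bool:
    """True when distillation removes more error than its circuit adds."""
    return error_after_distillation(error_in, suppression, added_error) < error_in


# Illustrative values only: 5% incoming error, distillation halves it.
print(is_below_threshold(0.05, 0.5, 0.01))  # clean circuit: net improvement
print(is_below_threshold(0.05, 0.5, 0.04))  # noisy circuit: net loss
```

In this model the filter only pays off when the error it removes exceeds the error its own hardware introduces, which is the sense of “below threshold” used above.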

Why This Matters for the Future

While companies like Google have achieved similar “below-threshold” milestones with superconducting processors, this marks the first time such a feat has been accomplished in a light-based system.

The implications are profound: if researchers can maintain high-quality qubits without the massive “overhead” (the enormous amount of extra hardware usually required to correct errors), the cost and complexity of building a universal quantum computer will drop significantly. This moves photonic computing from a theoretical possibility toward a viable, scalable technology capable of outperforming today’s most powerful supercomputers.

This breakthrough demonstrates that we can move past the “probabilistic” nature of light to create a predictable, scalable architecture for the next generation of computing.

Conclusion

By filtering out errors at the source through photon distillation, scientists have provided a roadmap for scaling light-based quantum computers. This development suggests that room-temperature, high-performance quantum computing may be much closer to reality than previously thought.